Syncing your data with Salesforce using IBM TDI

Recently some of my customers needed a solution to sync their customer data from IBM Domino or SAP to Salesforce.

They didn’t want to use another cloud solution for the syncing, and they already had IBM Tivoli Directory Integrator (TDI) in place for syncing user data between directories.

So here was the challenge: use IBM TDI to sync the data with Salesforce.

I had never worked with Salesforce before, so I did some digging and identified the Salesforce Bulk API as my preferred interface. As you know, I’m not a developer, so I preferred the interface that needed the least developer knowledge.

Using the Bulk API is pretty simple:

- Create a new job that specifies the object and action (query, insert, upsert…).

- Send data to the server in a number of batches (CSV files).

- Once all data has been submitted, close the job. Once closed, no more batches can be sent as part of the job.

- Check status of all batches at a reasonable interval. Each status check returns the state of each batch.

- When all batches have either completed or failed, retrieve the result for each batch.

- Match the result sets with the original data set to determine which records failed and succeeded, and take appropriate action.
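The last three steps can be sketched outside of TDI in a few lines of Python. This is only an illustration, not the script used in my assembly lines. It assumes that the batch states are named `Completed`, `Failed` and `NotProcessed`, and that a CSV batch result carries the columns `Id`, `Success`, `Created` and `Error` in the same row order as the submitted data, which is how I understand the Bulk API. The function names are made up for this example.

```python
import csv
import io

# States in which a batch will not change any more.
# Assumption: "NotProcessed" (e.g. after an aborted job) also counts as finished.
FINISHED_STATES = {"Completed", "Failed", "NotProcessed"}


def all_batches_finished(batch_states):
    """Return True once every batch has reached a final state."""
    return all(state in FINISHED_STATES for state in batch_states)


def match_results(sent_rows, result_csv):
    """Pair each submitted row with its result row, relying on identical order."""
    results = list(csv.DictReader(io.StringIO(result_csv)))
    succeeded, failed = [], []
    for row, result in zip(sent_rows, results):
        if result["Success"].lower() == "true":
            # Keep the Salesforce record ID next to the original row.
            succeeded.append((row, result["Id"]))
        else:
            # Keep the error text so the row can be corrected and resent.
            failed.append((row, result["Error"]))
    return succeeded, failed
```

A status check loop would call `all_batches_finished` at each interval and only fetch and match the results once it returns `True`.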

So in this case I had to do the following:

- Create an assembly line to get an API token

- Create a job that exports Salesforce customer data to an intermediate Domino database

- Map this data to customer data from a current Domino database

- Create a CSV file with the mapped customer data

- Send this CSV to the Bulk API as an upsert job (update and insert)

- Wait for the job to complete and capture the result
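To make the CSV step more concrete, here is a minimal Python sketch of turning mapped customer records into one CSV batch. In my solution TDI writes the file, so this is only an illustration. The field name `Customer_Number__c` is a hypothetical external ID field, and `build_upsert_csv` is a name invented for this example.

```python
import csv
import io


def build_upsert_csv(records, fieldnames):
    """Serialize mapped customer records into one CSV string with a header row."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=fieldnames, lineterminator="\n")
    writer.writeheader()
    for record in records:
        # Missing fields become empty cells; unknown keys raise ValueError.
        writer.writerow(record)
    return buffer.getvalue()


if __name__ == "__main__":
    customers = [
        {"Customer_Number__c": "1001", "Name": "Acme, Inc.", "BillingCity": "Zurich"},
        {"Customer_Number__c": "1002", "Name": "Globex"},
    ]
    print(build_upsert_csv(customers, ["Customer_Number__c", "Name", "BillingCity"]))
```

Using a CSV writer instead of joining strings by hand matters as soon as a customer name contains a comma or a quote.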

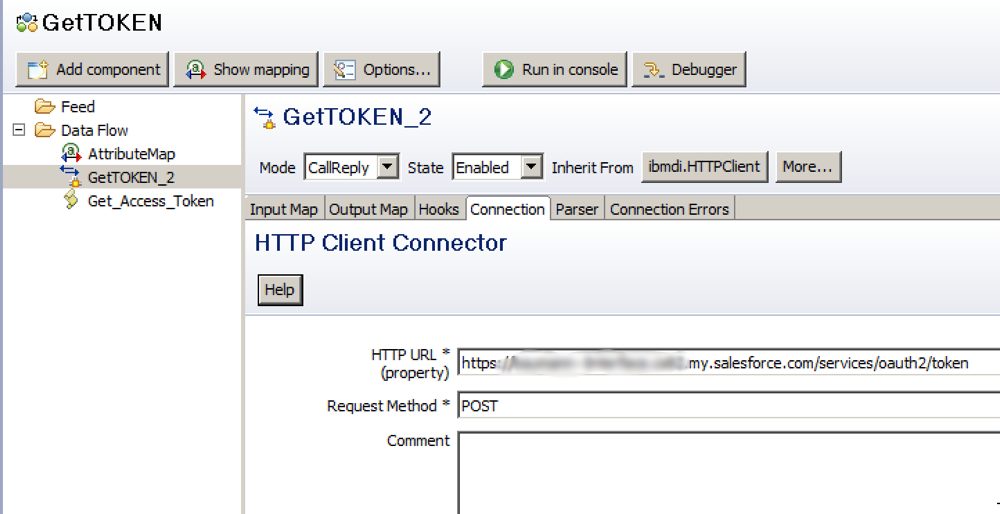

The hardest part of the work was the start: how do I get a token that I can use to send my jobs to the Bulk API?

I ended up using the HTTP Client connector in CallReply mode. I read the reply from http.bodyAsString and extracted the token with a JavaScript script (Get_Access_Token).
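The extraction itself is small. The following Python sketch shows the idea behind Get_Access_Token, assuming the login reply is a JSON body in the usual OAuth 2.0 shape with an `access_token` and an `instance_url` entry. The real script runs as JavaScript inside TDI, and the function name here is made up.

```python
import json


def get_access_token(body_as_string):
    """Return (access_token, instance_url) from a JSON token reply."""
    reply = json.loads(body_as_string)
    if "access_token" not in reply:
        # OAuth error replies carry "error" and "error_description" instead.
        raise ValueError(reply.get("error_description", "no access_token in reply"))
    return reply["access_token"], reply.get("instance_url")
```

Failing loudly when the token is missing is deliberate: every later Bulk API call depends on it, so there is no point in continuing the assembly line with an empty value.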

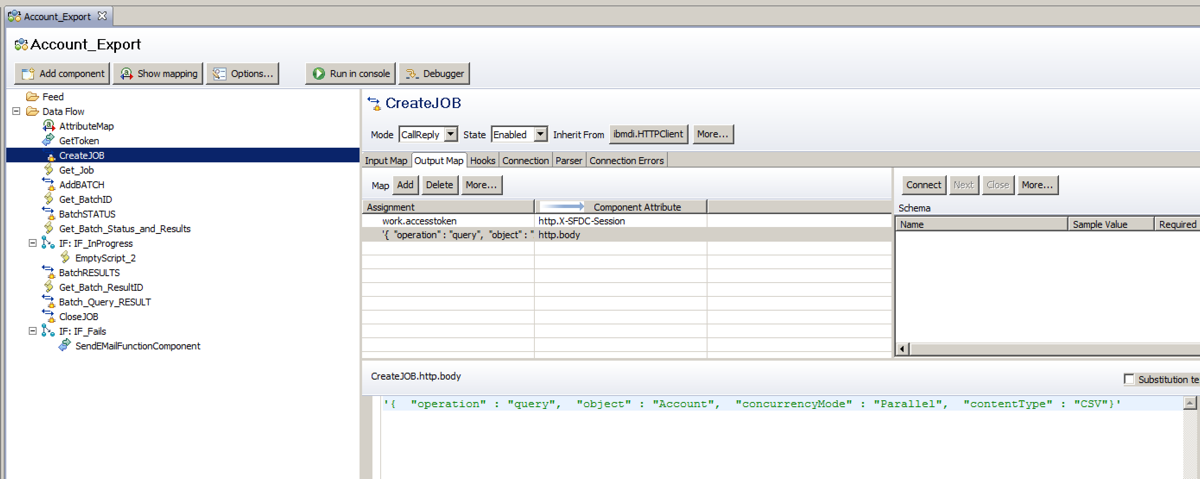

This access token can now be used for the Bulk API calls. This is an example of how I export the data:

As you can see, I first get the token and then create the job. Again the HTTP Client connector in CallReply mode is used, and I create an export job for the Account object:

Once the job is created, I have to capture the job ID and use it for all subsequent API calls. First I create the batch, that is, the action that should be executed, in this case:

So I want the results of this query on the Account object. The next step is to capture the batch ID and use it to check the result of the batch run. At the end I close the job and save the result as a CSV file on the TDI server.
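For readers who want to see what goes over the wire, here is a rough Python sketch of the job handling. It assumes the original XML-based Bulk API under `/services/async/<version>/job`, with the token passed in the `X-SFDC-Session` header. The instance URL, the API version and all function names are placeholders, not values from my setup.

```python
import xml.etree.ElementTree as ET
from xml.sax.saxutils import escape

ASYNC_NS = "http://www.force.com/2009/06/asyncapi/dataload"

# Body that is posted to the job URL to close the job.
CLOSE_JOB_BODY = f'<jobInfo xmlns="{ASYNC_NS}"><state>Closed</state></jobInfo>'


def build_create_job_request(instance_url, api_version, token, operation, sobject):
    """Return (url, headers, body) for creating a CSV job, e.g. a query on Account."""
    url = f"{instance_url}/services/async/{api_version}/job"
    headers = {
        "X-SFDC-Session": token,
        "Content-Type": "application/xml; charset=UTF-8",
    }
    body = (
        f'<jobInfo xmlns="{ASYNC_NS}">'
        f"<operation>{escape(operation)}</operation>"
        f"<object>{escape(sobject)}</object>"
        "<contentType>CSV</contentType>"
        "</jobInfo>"
    )
    return url, headers, body


def read_field(xml_reply, field):
    """Return the text of a direct child of a jobInfo or batchInfo reply."""
    root = ET.fromstring(xml_reply)
    node = root.find(f"{{{ASYNC_NS}}}{field}")
    if node is None:
        raise ValueError(f"reply has no <{field}> element")
    return node.text
```

`read_field(reply, "id")` gives the job ID after the job is created and the batch ID after the batch is added, and `read_field(reply, "state")` gives the state to check while waiting.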

Next, this data is transferred to an intermediate Domino database and mapped to the customer data I already have. This could be done by calling the Salesforce API directly, but I preferred to use an intermediate Domino database because the TDI Domino connectors are much easier to use than the HTTP Client connector.
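The mapping itself boils down to a lookup on a shared key. The sketch below shows that idea in Python, assuming both sides carry a common customer number. The key name `CustomerNumber` and the function name are hypothetical, and in my solution the lookup is done with Domino connectors rather than in code like this.

```python
def map_customers(salesforce_rows, domino_rows, key):
    """Split Domino customers into those already in Salesforce and new ones."""
    # Index the exported Salesforce rows by the shared key, skipping empty keys.
    known = {row[key]: row for row in salesforce_rows if row.get(key)}
    existing, new = [], []
    for customer in domino_rows:
        match = known.get(customer.get(key))
        if match:
            # Carry the Salesforce record ID over to the Domino record.
            existing.append({**customer, "Id": match["Id"]})
        else:
            new.append(customer)
    return existing, new
```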

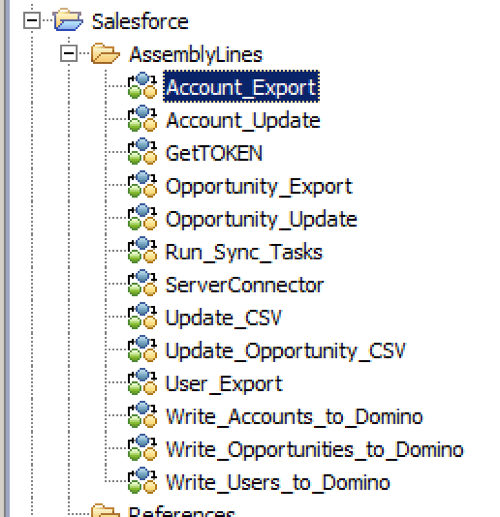

I ended up with some additional assembly lines, as you can see here:

The solution syncs not only customers but also opportunities. It was further enhanced to fetch SAP data and sync it with Salesforce through an intermediate Domino database.

As you can see, TDI is very powerful even for syncing data through APIs, and I recommend using it whenever Domino is involved as a data source.

Feel free to contact me if you would like to know more about this solution. I’m happy to help.